There are two kinds of software success stories: the ones that quietly compound for a decade, and the ones that make your CFO suddenly learn what “data warehouse” means. Snowflake is firmly in category #2, except it’s also doing the compounding thing.

Around the ~$4B “ARR-ish” mark (more on the “-ish” in a second), Snowflake is no longer a rocket ship trying to prove it can fly. It’s a jumbo jet trying to prove it can land, refuel, add new engines, and still serve pretzels without turbulence. And that’s where the learnings get juicy, because at this scale the story stops being “cool tech” and starts being “repeatable machine.”

Below are five interesting learnings from Snowflake’s trajectory near the ~$4 billion annual run-rate level, written for builders, operators, and anyone who has ever uttered the phrase: “Why is our cloud bill… like that?”

Learning #1: At Snowflake, “ARR” Is a Vibe (Consumption Changes the Math)

Let’s address the snowflake in the room: Snowflake doesn’t sell like a traditional seat-based SaaS company. A big part of its model is consumption: customers pay based on usage (think credits), plus committed spend and other structures. That means the classic SaaS shortcut (“just look at ARR”) doesn’t map perfectly.
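To make “pay based on usage” concrete, here is a rough compute-cost sketch. It assumes the commonly documented rates for standard Snowflake virtual warehouses (1 credit per hour for X-Small, doubling with each size step) and per-second billing with a 60-second minimum each time a warehouse resumes. The $3.00 price per credit is purely a placeholder, since real prices vary by edition, region, and contract, and storage and serverless features (billed separately) are ignored.

```python
# Assumed credits per hour for standard warehouse sizes (each step doubles).
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}

# Assumed minimum billed time each time a warehouse starts or resumes.
MIN_BILLED_SECONDS = 60


def credits_for_run(size: str, runtime_seconds: float) -> float:
    """Credits for one warehouse run, billed per second with a 60-second minimum."""
    billed_seconds = max(runtime_seconds, MIN_BILLED_SECONDS)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600


def compute_cost(runs: list[tuple[str, float]], price_per_credit: float) -> float:
    """Dollar cost of (size, runtime_seconds) runs at an assumed price per credit."""
    total_credits = sum(credits_for_run(size, secs) for size, secs in runs)
    return total_credits * price_per_credit


# A Medium warehouse busy for 30 minutes, plus a 10-second X-Small query
# that is still billed as a full minute. $3.00/credit is a placeholder.
runs = [("M", 1800.0), ("XS", 10.0)]
print(round(compute_cost(runs, price_per_credit=3.00), 2))  # 6.05
```

The point of the sketch is that revenue here is a function of runtime and warehouse size, not of seats, which is exactly why a seat-style “ARR” figure only approximates it.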

So what do people mean by “~$4B ARR” for Snowflake?

Most folks are using “ARR” as shorthand for an annualized revenue run rate (or product revenue at scale), not strictly contractual recurring revenue in the seat-based sense. In other words: it’s a useful mental model, as long as you remember it’s not a seat-count subscription business with perfectly linear renewals.
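The “-ish” is easy to see with a little arithmetic. This minimal sketch uses made-up quarterly product revenue (in $M, not Snowflake’s reported figures) and compares the common run-rate shorthand, latest quarter × 4, with trailing-twelve-month revenue, which is what was actually recognized over the past year.

```python
def run_rate_from_latest_quarter(quarterly_revenue: list[float]) -> float:
    """Latest quarter x 4: the usual 'run-rate' shorthand."""
    return quarterly_revenue[-1] * 4


def trailing_twelve_months(quarterly_revenue: list[float]) -> float:
    """Sum of the last four quarters: revenue actually recognized over the past year."""
    return sum(quarterly_revenue[-4:])


# Hypothetical quarterly product revenue in $M (illustrative, not reported figures).
quarters = [900.0, 940.0, 985.0, 1000.0]
print(run_rate_from_latest_quarter(quarters))  # 4000.0
print(trailing_twelve_months(quarters))        # 3825.0
```

In a growing consumption business the run rate sits above trailing revenue, and a soft usage quarter can just as easily pull it below, which is why it works as a mental model rather than a contractual number.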

The better operator lens: predictability plus momentum

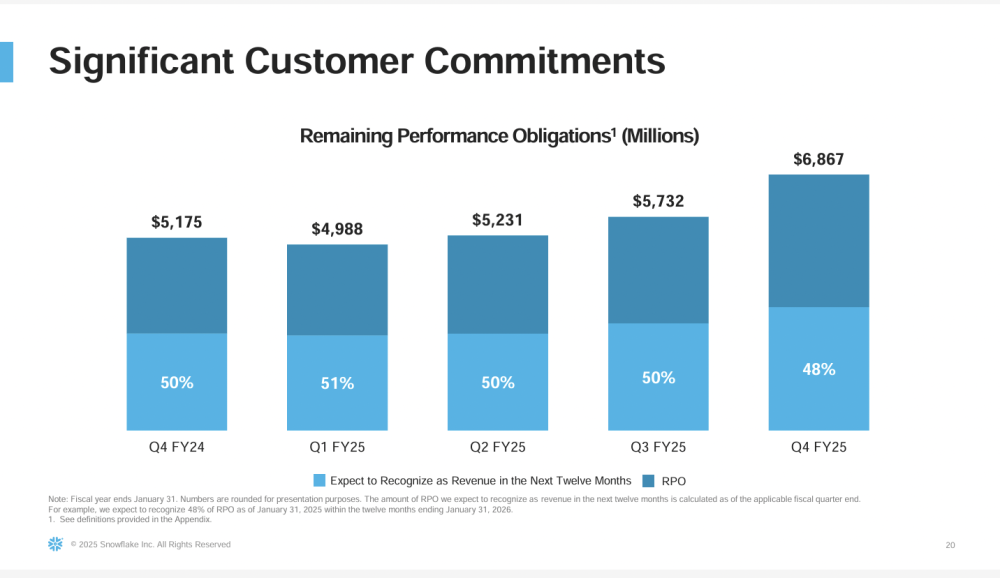

If you’re trying to understand durability in a consumption business, you look for signals like: (1) retention, (2) large customer expansion, and (3) contracted future revenue visibility. Snowflake emphasizes Remaining Performance Obligations (RPO), which is contracted future revenue not yet recognized. RPO isn’t “ARR,” but it’s a serious indicator of committed demand and forward coverage.

The real learning here: consumption businesses can be more resilient than skeptics assume, if they build a product that becomes infrastructure. When your platform becomes the place where data lives and work happens, “usage-based” stops feeling like a risk and starts feeling like the reward for being essential.

If you run a SaaS company and you’re debating pricing models, Snowflake’s scale is a reminder: consumption can work incredibly well when you’re selling a foundational layer (data + compute + governance), not a “nice-to-have” workflow tool.

Learning #2: World-Class Net Revenue Retention Still Matters at Scale (Even at 125%)

In the early hypergrowth days, SaaS Twitter acted like anything below 140% net revenue retention (NRR) was a crime. Then reality showed up with a clipboard and a macroeconomic cycle.

Snowflake’s NRR around the mid-120s is still elite at this size, and it’s arguably more impressive precisely because it’s happening at scale. When you have tens of thousands of workloads, retention isn’t powered by one department’s enthusiasm; it’s powered by organizational dependence.

Why 125% NRR can be “quietly monstrous”

- It compounds: If your existing base expands meaningfully each year, new sales don’t need to do all the heavy lifting.

- It signals product breadth: Expansion usually means more workloads, more departments, and more mission-critical usage, not just one team running a few queries.

- It reduces go-to-market pressure: Your sales team can stop living in a perpetual “new logo or else” Hunger Games.
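Here is one way to make the compounding claim concrete. This sketch uses a common NRR definition (current-period revenue from last period’s customer cohort, divided by that cohort’s prior-period revenue); Snowflake and other companies each publish their own precise definitions, so treat it as illustrative. The customer names and amounts are hypothetical, and holding NRR constant over several years is a simplifying assumption.

```python
def net_revenue_retention(prior: dict[str, float], current: dict[str, float]) -> float:
    """Current revenue from the prior-period cohort divided by that cohort's prior revenue.

    Customers missing from `current` count as churned (zero revenue).
    Customers who appear only in `current` are new logos and are excluded.
    """
    cohort_base = sum(prior.values())
    cohort_now = sum(current.get(customer, 0.0) for customer in prior)
    return cohort_now / cohort_base


def cohort_revenue_after(years: int, nrr: float, starting_revenue: float = 1.0) -> float:
    """Revenue from a fixed cohort after `years`, assuming NRR stays constant."""
    return starting_revenue * nrr**years


# Hypothetical cohort: three expanding accounts, one churned account ("d"),
# plus a new logo that NRR deliberately ignores.
prior = {"a": 100.0, "b": 100.0, "c": 100.0, "d": 100.0}
current = {"a": 220.0, "b": 150.0, "c": 130.0, "new_logo": 75.0}

print(net_revenue_retention(prior, current))  # 1.25
print(cohort_revenue_after(3, 1.25))          # 1.953125
```

At a constant 125%, a cohort roughly doubles in about three years without a single new logo, even after absorbing a fully churned account.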

The “$1M+ customer” story is the practical proof

At this stage, the best retention story isn’t a percentage; it’s the shape of your customer base. When a growing number of customers are contributing $1M+ in trailing product revenue, that’s not casual usage. That’s a platform becoming a line item no one wants to remove (because removing it would require replacing a lot of work).
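Counting “$1M+ customers” is a trailing-window calculation. A minimal sketch, assuming each customer’s product revenue is available as a list of monthly amounts (oldest first) and that the $1M threshold is inclusive; the customer names and figures are hypothetical.

```python
def count_million_dollar_customers(
    monthly_revenue: dict[str, list[float]], threshold: float = 1_000_000.0
) -> int:
    """Count customers whose trailing-12-month revenue is at or above `threshold`.

    Each list holds one customer's monthly product revenue, oldest first;
    only the most recent 12 months are summed.
    """
    return sum(1 for months in monthly_revenue.values() if sum(months[-12:]) >= threshold)


# Hypothetical customers (monthly revenue in dollars).
customers = {
    "acme": [90_000.0] * 12,                        # 1.08M trailing: counts
    "globex": [200_000.0] * 3,                      # 0.6M so far: does not count yet
    "initech": [50_000.0] * 6 + [100_000.0] * 12,   # 1.2M trailing: counts
    "umbrella": [500_000.0] * 2 + [40_000.0] * 12,  # 0.48M trailing: big past, not counted
}
print(count_million_dollar_customers(customers))  # 2
```

The trailing window is what makes the metric a signal of current dependence: a customer who spent heavily years ago but has since shrunk drops out.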

The broader learning: at ~$4B scale, the job isn’t to chase novelty. It’s to keep earning expansion by making “more data + more workloads” genuinely easier, safer, and faster than the alternatives.

Learning #3: Efficiency Becomes a Product Feature (Not Just a Finance Slide)

Smaller companies can grow with messy operations. Bigger companies can’t. At multi-billion scale, your internal efficiency becomes part of your market identity, because it affects pricing flexibility, innovation pace, and how hard you can press the gas without the engine catching fire.

Snowflake has emphasized healthy margins and disciplined investment while still pushing product expansion. That combo matters because it signals a mature phase: the company can fund growth from strength rather than from vibes and venture capital.

Why this matters for customers (yes, customers)

A financially durable vendor is a safer platform bet. Enterprises don’t just buy features; they buy the probability the platform will still be winning (and supported) five years from now.

Why this matters for operators (especially SaaS operators)

When you’re nearing $4B in revenue scale, the game shifts:

- Sales efficiency matters: Your CAC model has to work without heroic assumptions.

- Hiring discipline matters: Headcount growth can’t outpace the value created.

- Cost-to-serve matters: Your cloud spend, support load, and internal tooling become competitive variables.

The practical learning: “efficiency” isn’t a phase you enter when you’re tired. It’s a capability you build so you can keep building. The best companies make efficiency a flywheel: better unit economics → more reinvestment → better product → more usage → better unit economics.

Learning #4: Platform Effects Show Up in Sharing, Marketplace, and Interoperability

A data platform isn’t just a warehouse. At scale, it becomes an ecosystem: data sharing between organizations, marketplaces for third-party datasets, and developer workflows that treat the platform like a runtime.

Snowflake has leaned into the “Data Cloud” idea for years, but the interesting learning near ~$4B scale is this: network effects become visible in the numbers and in user behavior, not just in marketing.

Data sharing and marketplace are not side quests

Sharing reduces friction. Friction is the enemy of analytics. When teams can access governed data without copying it across systems, they move faster and take fewer security risks. Marketplaces add a second-order benefit: customers can bring in external data (industry, financial, location, etc.) in a way that’s operationally sane.

The platform effect learning: once data sharing and marketplace usage become common, Snowflake isn’t just the place you store data. It’s the place data meets other data, without everyone exporting CSVs like it’s 2009.

Interoperability is a strategic weapon

The modern data stack is allergic to lock-in. (Okay, it’s not allergic; it’s just deeply suspicious.) That’s why interoperability matters: open table formats, multi-cloud flexibility, and “bring your tools” workflows.

Snowflake’s push around Apache Iceberg interoperability (and related catalog efforts) highlights an important learning for any platform at scale: openness is not the opposite of strategy. Openness can be the strategy that makes adoption easier, expands workloads, and reduces the fear that kills deals.

Learning #5: AI “in the data” Wins When It’s Governed, Not Gimmicky

The AI era has produced two categories of enterprise announcements: (1) “We added AI!” and (2) “We made AI usable with enterprise data, permissions, and auditing.” The second category is the one that survives procurement.

Snowflake’s AI positioning has increasingly focused on enabling organizations to run AI and analytics where the data already lives, with governance and security controls intact. That includes packaged AI features, agentic experiences, and partnerships that make leading models available through a controlled platform layer.

Why “agentic” only matters when it’s attached to real work

Enterprises don’t buy AI because it’s cool. They buy AI because it can reduce cycle time, improve decisions, and automate repetitive analysis, without turning compliance teams into full-time firefighters.

The learning: at ~$4B scale, AI isn’t a feature checkbox. It’s a workload expansion strategy. If you can make unstructured data (documents, text, images, logs) queryable and actionable in the same governed environment as structured data, you expand consumption and deepen platform dependence.

Adjacent workloads (like observability) are a growth lever

Another interesting signal is Snowflake’s move to bring observability/telemetry use cases closer to the platform. Logs and metrics are essentially data exhaust: high volume, high value, and historically expensive to manage. If you can help customers store, analyze, and act on that telemetry efficiently, you become even more central.

The bigger point: AI increases the value of data, and it increases the pressure to manage it well. Platforms that combine performance, governance, and AI usability are positioned to capture that value.

So, What Should You Take Away?

If you zoom out, Snowflake near the ~$4B run-rate level teaches a simple set of operator truths:

- Consumption can scale when the product becomes infrastructure and customers grow into it.

- Retention is still king, and 125% NRR at scale is a compounding machine, not “meh.”

- Efficiency is a moat because it funds innovation and reduces platform risk for customers.

- Platform effects are real when sharing, marketplace, and interoperability lower friction.

- AI becomes durable when it’s governed, embedded in workflows, and attached to real data.

In other words: Snowflake didn’t get here by being a single product. It got here by becoming a default place where data teams build, and then by expanding what “build” means.

Experience: 5 Field Notes from Living in the Snowflake Universe

To make these learnings feel less like an investor deck and more like real life, here’s what the Snowflake journey tends to look like from the inside, based on the kinds of patterns operators run into when a company adopts a consumption data platform at scale.

1) The “first query is free” illusion is adorable (and temporary)

The early days are magical. Analysts run queries and dashboards load faster. People start saying things like, “Wait… I can join these tables without my laptop sounding like a leaf blower?” Then the first big billing cycle hits, and suddenly everyone discovers the concept of FinOps.

The fix isn’t panic; it’s governance. You set resource monitors, tag workloads, separate dev/test/prod, and teach teams that “SELECT *” is not a personality trait. Once cost visibility becomes a habit, the consumption model stops being scary and starts being empowering: teams spend where there’s value and optimize where there isn’t.
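The habits above (tag workloads, set budgets, act before the bill arrives) can be sketched in a few lines. Snowflake’s resource monitors work in this spirit, with credit quotas and percentage triggers that notify or suspend warehouses, but they are configured in SQL; everything below, including the tag names, quotas, and the 80%/100% thresholds, is a hypothetical illustration rather than that feature’s API.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    workload_tag: str  # hypothetical tag such as "dev" or "prod"
    credits: float


def credits_by_tag(usage: list[Usage]) -> dict[str, float]:
    """Roll up credit consumption per workload tag."""
    totals: dict[str, float] = {}
    for record in usage:
        totals[record.workload_tag] = totals.get(record.workload_tag, 0.0) + record.credits
    return totals


def budget_actions(
    totals: dict[str, float],
    quotas: dict[str, float],
    notify_at: float = 0.8,
    suspend_at: float = 1.0,
) -> dict[str, str]:
    """Map each tag to 'ok', 'notify', 'suspend', or 'no_quota' (spend nobody budgeted)."""
    actions: dict[str, str] = {}
    for tag, used in totals.items():
        quota = quotas.get(tag)
        if quota is None:
            actions[tag] = "no_quota"
        elif used >= suspend_at * quota:
            actions[tag] = "suspend"
        elif used >= notify_at * quota:
            actions[tag] = "notify"
        else:
            actions[tag] = "ok"
    return actions


usage = [
    Usage("dev", 30.0), Usage("dev", 55.0),
    Usage("prod", 400.0),
    Usage("sandbox", 120.0),
    Usage("adhoc", 12.0),
]
quotas = {"dev": 100.0, "prod": 1000.0, "sandbox": 100.0}
print(budget_actions(credits_by_tag(usage), quotas))
# {'dev': 'notify', 'prod': 'ok', 'sandbox': 'suspend', 'adhoc': 'no_quota'}
```

Flagging untagged or unbudgeted spend separately is a deliberate choice here: in practice, the spend nobody owns is often the spend nobody is watching.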

2) The real adoption moment is when non-data people stop asking for extracts

A surprising milestone is not “we migrated the warehouse.” It’s when marketing, finance, or operations stops requesting CSV exports “just for this one thing.” That’s when the platform becomes trusted enough that people will work inside it (or through tools connected to it) instead of pulling data out and making shadow copies.

This is also where the data team’s reputation improves. You go from “the team that says no” to “the team that makes answers show up faster than meetings.”

3) Security teams relax when governance is designed, not bolted on

The fastest way to destroy momentum is to treat security like a late-stage patch. The fastest way to accelerate momentum is to make governance part of the architecture: role-based access, masking policies, auditing, and clear data ownership.
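Masking policies are the easiest piece of this to picture in code. In Snowflake they are written in SQL and evaluated at query time against context such as the current role; the Python below only mimics that idea. The roles, columns, masking formats, and the in-memory audit log are all hypothetical.

```python
# Hypothetical policy: roles allowed to see each sensitive column unmasked.
# Columns not listed here are not sensitive and are always returned as-is.
UNMASKED_ROLES: dict[str, set[str]] = {
    "email": {"SUPPORT_ADMIN", "COMPLIANCE"},
    "ssn": {"COMPLIANCE"},
}

# Hypothetical audit trail: (role, columns that were masked for that read).
audit_log: list[tuple[str, tuple[str, ...]]] = []


def mask(column: str, value: str) -> str:
    """Partially mask emails; fully mask everything else."""
    if column == "email":
        local, _, domain = value.partition("@")
        return f"{local[:1]}***@{domain}"
    return "***MASKED***"


def read_row(row: dict[str, str], role: str) -> dict[str, str]:
    """Return the row as `role` is allowed to see it, and record what was masked."""
    result: dict[str, str] = {}
    masked: list[str] = []
    for column, value in row.items():
        allowed = UNMASKED_ROLES.get(column)
        if allowed is None or role in allowed:
            result[column] = value
        else:
            result[column] = mask(column, value)
            masked.append(column)
    audit_log.append((role, tuple(masked)))
    return result


row = {"customer_id": "C-17", "email": "ana@example.com", "ssn": "123-45-6789"}
print(read_row(row, "ANALYST"))
# {'customer_id': 'C-17', 'email': 'a***@example.com', 'ssn': '***MASKED***'}
```

Because the policy lives next to the data rather than in each dashboard or notebook, every consumer gets the same answer for the same role, which is what “designed, not bolted on” looks like in practice.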

When that’s in place, the business can move quickly without opening a compliance-themed horror show. And that unlocks more workloads, because people trust the environment enough to put important things in it.

4) Data sharing feels like a superpower the first time you use it correctly

The first time you share governed data across teams (or even across organizations) without copying it into five new systems, it genuinely feels like cheating. Suddenly “single source of truth” becomes a little less of a slogan and a little more of an operational reality.
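A loose analogy for what “without copying” buys you: Snowflake’s Secure Data Sharing gives a consumer read-only access to the provider’s objects rather than shipping a copy of the data. The Python below models that contrast with the standard library’s `types.MappingProxyType` (a live, read-only view) versus a plain `dict` snapshot. The table contents are made up, and this is an analogy, not how the feature is implemented.

```python
from collections.abc import Mapping
from types import MappingProxyType


def make_share(table: dict[str, int]) -> Mapping[str, int]:
    """A live, read-only view of the provider's table; no data is copied."""
    return MappingProxyType(table)


def export_snapshot(table: dict[str, int]) -> dict[str, int]:
    """The CSV-export pattern: a copy that starts going stale immediately."""
    return dict(table)


provider_table = {"emea": 120, "amer": 340}  # hypothetical weekly orders by region
share = make_share(provider_table)
snapshot = export_snapshot(provider_table)

provider_table["apac"] = 95   # the provider updates its own table in place
print(share.get("apac"))      # 95: the share sees the update with no re-export
print(snapshot.get("apac"))   # None: the exported copy is already stale

try:
    share["emea"] = 0         # consumers cannot modify shared data
except TypeError:
    print("read-only")
```

The snapshot is where “final_final_v7.xlsx” comes from: every export is a fork that someone has to reconcile later.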

That’s also where platform effects start to feel tangible: fewer copies, fewer stale dashboards, fewer arguments about which file is “final_final_v7.xlsx.”

5) AI features are useful only after you do the unglamorous work

Everyone wants AI on day one. But AI is like a sports car: if your roads are full of potholes, you’ll just crash faster. The unglamorous work (data quality, documentation, lineage, permissions) turns “AI demos” into “AI workflows.”

Once that foundation exists, AI can actually help. Summarizing support tickets, extracting entities from documents, or enabling natural-language exploration becomes valuable because it’s connected to governed data. That’s the moment where AI stops being theater and starts being leverage.
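The “connected to governed data” point is mostly about ordering: apply permissions before anything reaches a model. A minimal sketch, assuming each document carries a set of roles allowed to read it; the naive keyword retrieval, role names, and ticket text are hypothetical, and the actual model call is deliberately left out rather than invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_roles: frozenset[str]  # hypothetical per-document access list


def retrieve_for_role(docs: list[Doc], role: str, query: str) -> list[Doc]:
    """Naive keyword retrieval that only searches documents the role may read."""
    terms = query.lower().split()
    return [
        d for d in docs
        if role in d.allowed_roles and any(t in d.text.lower() for t in terms)
    ]


def build_prompt(question: str, context: list[Doc]) -> str:
    """Assemble a prompt from permitted context only; sending it to a model is out of scope."""
    lines = "\n".join(f"[{d.doc_id}] {d.text}" for d in context)
    return f"Answer using only this context:\n{lines}\n\nQuestion: {question}"


tickets = [
    Doc("T1", "Refund delayed for order 1042", frozenset({"SUPPORT"})),
    Doc("T2", "Refund exposure by region for Q3 close", frozenset({"FINANCE"})),
    Doc("T3", "Login loop after password reset", frozenset({"SUPPORT"})),
]
visible = retrieve_for_role(tickets, "SUPPORT", "refund")
print([d.doc_id for d in visible])  # ['T1']
print(build_prompt("Summarize open refund issues", visible))
```

Filtering at retrieval time means the model never sees the finance ticket at all, which is a much easier story to tell an auditor than “we asked the model not to mention it.”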

Put it all together, and the lived experience matches the business learnings: Snowflake wins when it becomes essential, expands workloads responsibly, and keeps the platform trustworthy: technically, financially, and operationally.